The 19th-Century Fight Against Bacteria-Ridden Milk Preserved With Embalming Fluid

In an unpublished excerpt from her new book The Poison Squad, Deborah Blum chronicles the public health campaign against tainted dairy products


At the turn of the 20th century, Indiana was widely hailed as a national leader in public health issues. This was almost entirely due to the work of two unusually outspoken scientists.

One was Harvey Washington Wiley, a one-time chemistry professor at Purdue University who had become chief chemist at the federal Department of Agriculture and the country’s leading crusader for food safety. The other was John Newell Hurty, Indiana’s chief public health officer, a sharp-tongued, hygiene-focused — cleanliness “is godliness” — official who was relentlessly determined to reduce disease rates in his home state.

Hurty began his career as a pharmacist, and was hired in 1873 by Col. Eli Lilly as chief chemist for a new drug manufacturing company the colonel was establishing in Indianapolis. In 1884, he became a professor of pharmacy at Purdue, where he developed an interest in public health that led him, in 1896, to become Indiana’s chief health officer. He recognized that many of the plagues of the time — from typhoid to dysentery — were spread by lack of sanitation, and he made it a point to rail against “flies, filth, and dirty fingers.”

By the end of the 19th century, that trio of risks had led Hurty to make the household staple of milk one of his top targets. The notoriously careless habits of the American dairy industry had come to infuriate him, so much so that he’d taken to printing up posters for statewide distribution that featured the tombstones of children killed by “dirty milk.”

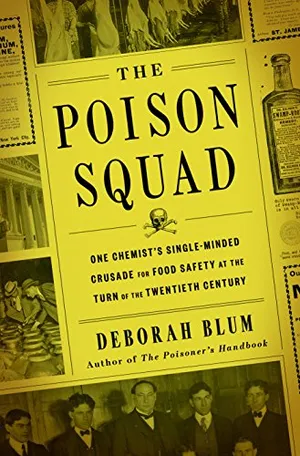

The Poison Squad: One Chemist's Single-Minded Crusade for Food Safety at the Turn of the Twentieth Century

From Pulitzer Prize winner and New York Times-bestselling author Deborah Blum, the dramatic true story of how food was made safe in the United States and the heroes, led by the inimitable Dr. Harvey Washington Wiley, who fought for change.

But although Hurty’s advocacy persuaded Indiana to pass a food safety law in 1899, years before the federal government took action, he and many of his colleagues found that milk — messily adulterated, either teeming with bacteria or preserved with toxic compounds — posed a particularly daunting challenge.

Hurty was far from the first to rant about the sorry quality of milk. In the 1850s, milk sold in New York City was so poor, and the contents of bottles so risky, that one local journalist demanded to know why the police weren’t called on dairymen. In the 1880s, an analysis of milk in New Jersey found the “liquifying colonies [of bacteria]” to be so numerous that the researchers simply abandoned the count.

But there were other factors besides risky strains of bacteria that made 19th-century milk untrustworthy. The worst of these were the many tricks that dairymen used to increase their profits. Far too often, not only in Indiana but nationwide, dairy producers thinned milk with water (sometimes containing a little gelatin) and recolored the resulting bluish-gray liquid with dyes, chalk, or plaster dust.

They also faked the look of rich cream by using a yellowish layer of pureed calf brains. As a historian of the Indiana health department wrote: “People could not be induced to eat brain sandwiches in [a] sufficient amount to use all the brains, and so a new market was devised.”

“Surprisingly enough,” he added, “it really did look like cream but it coagulated when poured into hot coffee.”

Finally, if the milk was threatening to sour, dairymen added formaldehyde, an embalming compound long used by funeral parlors, to stop the decomposition, also relying on its slightly sweet taste to improve the flavor. In the late 1890s, formaldehyde was so widely used by the dairy and meat-packing industries that outbreaks of illnesses related to the preservative were routinely described by newspapers as “embalmed meat” or “embalmed milk” scandals.

Indianapolis at the time offered a near-perfect case study in all the dangers of milk in America, one that was unfortunately linked to hundreds of deaths and highlighted not only Hurty’s point about sanitation but also the often lethal risks of food and drink before federal safety regulations took effect in 1906.

In late 1900, Hurty’s health department published such a blistering analysis of locally produced milk that The Indianapolis News titled its resulting article “Worms and Moss in Milk.” The finding came from an analysis of a pint bottle handed over by a family alarmed by signs that their milk was “wriggling.” It turned out to be worms, which investigators found had been introduced when a local dairyman thinned the milk with “stagnant water.”

The health department’s official bulletin, published that same year, also noted the discovery of sticks, hairs, insects, blood, and pus in milk; in addition, the department tracked such a steady diet of manure in dairy products that it estimated that the citizens of Indianapolis consumed more than 2,000 pounds of manure in a given year.

Hurty, who set the sharply pointed tone for his department’s publications, added that “many [child] deaths and sickness” of the time involving severe nausea and diarrhea — a condition sometimes known as “summer complaint” — might instead be traced to a steady supply of filthy milk. “People do not appreciate the danger lurking in milk that isn’t pure,” he wrote after one particularly severe spate of deaths.

The use of formaldehyde was the dairy industry’s solution to official concerns about pathogenic microorganisms in milk. In Hurty’s time, the most dangerous included those carrying bovine tuberculosis, undulant fever, scarlet fever, typhoid, and diphtheria. (Today, public health scientists worry more about pathogens such as E. coli, salmonella, and listeria in untreated or raw milk.)

The heating of a liquid to 120 to 140 degrees Fahrenheit for about 20 minutes to kill pathogenic bacteria was first reported by the French microbiologist Louis Pasteur in the 1850s. But although the process would later be named pasteurization in his honor, Pasteur’s focus was actually on wine. It was more than 20 years later that the German chemist Franz von Soxhlet would propose the same treatment for milk. In 1899, the Harvard microbiologist Theobald Smith — known for his discovery of Salmonella — also argued for this, after showing that pasteurization could kill some of the most stubborn pathogens in milk, such as the bovine tubercle bacillus.

But pasteurization would not become standard procedure in the United States until the 1930s, and even American doctors resisted the idea. The year before Smith announced his discovery, the American Pediatric Society erroneously warned that feeding babies heated milk could lead them to develop scurvy.

Such attitudes encouraged the dairy industry to deal with milk’s bacterial problems simply by dumping formaldehyde into the mix. And although Hurty would later become a passionate advocate of pasteurization, at first he endorsed the idea of chemical preservatives.

In 1896, desperately concerned about diseases linked to pathogens in milk, he even endorsed formaldehyde as a good preservative. The recommended dose of two drops of formalin (a mix of 40 percent formaldehyde and 60 percent water) could preserve a pint of milk for several days. It was a tiny amount, Hurty said, and he thought it might make the product safer.

But the amounts were often far from tiny. Thanks to Hurty, Indiana passed the Pure Food Law in 1899, but the state provided no money for enforcement or testing. So dairymen began increasing the dose of formaldehyde, seeking to keep their product “fresh” for as long as possible. Chemical companies came up with new formaldehyde mixtures with innocuous names such as Iceline or Preservaline. (The latter was said to keep a pint of milk fresh for up to 10 days.) And as the dairy industry increased the amount of preservatives, the milk became more and more toxic.

Hurty was alarmed enough that by 1899, he was urging that formaldehyde use be stopped, citing “increasing knowledge” that the compound could be dangerous even in small doses, especially to children. But the industry did not heed the warning.

In the summer of 1900, The Indianapolis News reported on the deaths of three infants in the city’s orphanage due to formaldehyde poisoning. A further investigation indicated that at least 30 children had died two years prior due to use of the preservative, and in 1901, Hurty himself referenced the deaths of more than 400 children due to a combination of formaldehyde, dirt, and bacteria in milk.

Following that outbreak, the state began prosecuting dairymen for using formaldehyde and, at least briefly, reduced the practice. But it wasn’t until Harvey Wiley and his allies helped secure the federal Pure Food and Drug Act in 1906 that the compound was at last banned from the food supply.

In the meantime, Hurty had become an enthusiastic supporter of pasteurization, which he recognized as both safer and cleaner. When a reporter asked him if he really thought formaldehyde had been all that bad for infants, he replied with his usual directness: “Well, it’s embalming fluid that you are adding to milk. I guess it’s all right if you want to embalm the baby.”

Deborah Blum, a Pulitzer Prize-winning journalist, is director of the Knight Science Journalism program at MIT and publisher of Undark magazine. She is the author of six books, including “The Poisoner’s Handbook” and most recently “The Poison Squad.”
