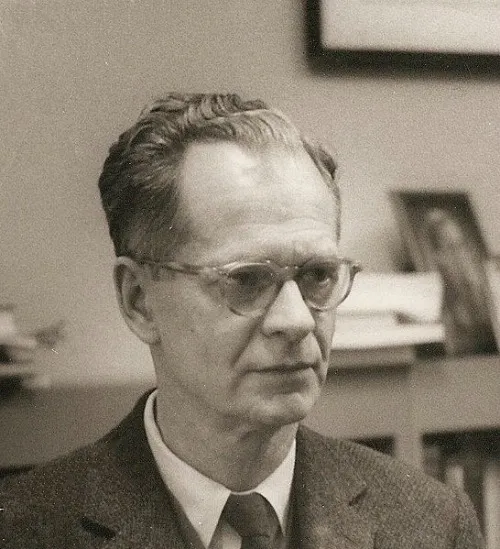

B.F. Skinner: The Man Who Taught Pigeons to Play Ping-Pong and Rats to Pull Levers

One of behavioral psychology’s most famous scientists was also one of the quirkiest


B.F. Skinner, a leading 20th-century psychologist who hypothesized that behavior was caused only by external factors, not by thoughts or emotions, was a controversial figure in a field that tends to attract controversial figures. In a realm of science that has given us Sigmund Freud, Carl Jung and Jean Piaget, Skinner stands out by sheer quirkiness. After all, he is the scientist who trained rats to pull levers and push buttons and taught pigeons to read and play ping-pong.

Besides Freud, Skinner is arguably the most famous psychologist of the 20th century. Today, his work is basic study in introductory psychology classes across the country. But what drives a man to teach his children’s cats to play piano and instruct his beagle on how to play hide and seek? Last year, Norwegian researchers dove into his past to figure it out. The team combed through biographies, archival material and interviews with those who knew him, then tested Skinner on a common personality scale.

They found Skinner, who would be 109 years old today, was highly conscientious, extroverted and somewhat neurotic—a trait shared by as many as 45 percent of leading scientists. The analysis revealed him to be a tireless worker, one who introduced a new approach to behavioral science by building on the theories of Ivan Pavlov and John Watson.

Skinner wasn’t interested in understanding the human mind and its mental processes; his field of study, known as behaviorism, was primarily concerned with observable actions and how they arose from environmental factors. He believed that our actions are shaped by our experience of reward and punishment, an approach that he called operant conditioning. The term “operant” refers to an animal or person “operating” on their environment to effect change while learning a new behavior.

Operant conditioning breaks down a task into increments. If you want to teach a pigeon to turn in a circle to the left, you give it a reward for any small movement it makes in that direction. Soon, the pigeon catches on and makes larger movements to the left, which garner more rewards, until the bird completes the full circle. Skinner believed that this type of learning even relates to language and the way we learn to speak. Children are rewarded, through their parents’ verbal encouragement and affection, for making a sound that resembles a certain word until they can actually say that word.
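For readers who think in code, the pigeon example can be sketched as a toy simulation. This is only an illustration under loud assumptions: the function name `shape_left_turn`, the starting criterion, the 15-degree step and the random spread are all invented here, not taken from Skinner's experiments, and the model ignores what happens to unrewarded behavior over time.

```python
import random


def shape_left_turn(target=360.0, step=15.0, spread=10.0, seed=0, max_trials=10_000):
    """Toy model of shaping: reward ever-larger left turns until a full circle.

    Every number here is a made-up assumption for illustration.
    Returns (trials used, rewards given, size of the last rewarded turn).
    """
    rng = random.Random(seed)  # seeded so the run is repeatable
    typical_turn = 0.0         # degrees the bird tends to turn at the start
    criterion = 5.0            # smallest left turn that currently earns a reward
    rewards = 0

    for trial in range(1, max_trials + 1):
        # The bird's movement varies around its current habit.
        turn = rng.gauss(typical_turn, spread)

        if turn < criterion:
            continue  # no reward, so the habit stays where it was

        # Reward: the movement that paid off becomes the new habit.
        rewards += 1
        typical_turn = turn
        if turn >= target:
            return trial, rewards, turn

        # The trainer now asks for a slightly bigger turn.
        criterion = min(target, criterion + step)

    # Ran out of trials before the bird completed a full circle.
    return max_trials, rewards, typical_turn
```

Calling `shape_left_turn()` returns how many trials the simulated bird needed, how many rewards it earned along the way and the size of its final turn. The point of the sketch is the loop itself: no single reward teaches the full circle, but each one nudges the habit a little further in the rewarded direction.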

Skinner’s approach introduced a new term into the literature: reinforcement. Behavior that is reinforced, like a mother excitedly drawing out the sounds of “mama” as a baby coos, tends to be repeated, and behavior that’s not reinforced tends to weaken and die out. “Positive” reinforcement refers to the practice of encouraging a behavior by adding something, such as rewarding a dog with a treat, and “negative” reinforcement refers to encouraging a behavior by taking something away. For example, when a driver absentmindedly continues to sit in front of a green light, the driver waiting behind them honks his car horn. The first person is reinforced for moving when the honking stops. The phenomenon of reinforcement extends beyond babies and pigeons: we’re rewarded for going to work each day with a paycheck every two weeks, and likely wouldn’t step inside the office once it was taken away.

Today, the spotlight has shifted from such behavior analysis to cognitive theories, but some of Skinner’s contributions continue to hold water, from teaching dogs to roll over to convincing kids to clean their rooms. Here are a few:

1. The Skinner box. To show how reinforcement works in a controlled environment, Skinner placed a hungry rat into a box that contained a lever. As the rat scurried around inside the box, it would accidentally press the lever, causing a food pellet to drop into the box. After several such runs, the rat quickly learned that upon entering the box, running straight toward the lever and pressing down meant receiving a tasty snack. The rat learned how to use a lever to its benefit in an unpleasant situation too: in another box that administered small electric shocks, pressing the lever caused the unpleasant zapping to stop.

2. Project Pigeon. During World War II, the military invested in Skinner’s project to train pigeons to guide missiles through the skies. The psychologist used a device that emitted a clicking noise to train pigeons to peck at a small, moving point underneath a glass screen. Skinner posited that the birds, situated in front of a screen inside of a missile, would see enemy torpedoes as specks on the glass, and rapidly begin pecking at them. Their movements would then be used to steer the missile toward the enemy: Pecks at the center of the screen would direct the rocket to fly straight, while off-center pecks would cause it to tilt and change course. Skinner managed to teach one bird to peck at a spot more than 10,000 times in 45 minutes, but the prospect of pigeon-guided missiles, along with adequate funding, eventually lost luster.

3. The Air-Crib. Skinner tried to mechanize childcare through the use of this “baby box,” which maintained the temperature of a child’s environment. Humorously known as an “heir conditioner,” the crib was completely humidity- and temperature-controlled, a feature Skinner believed would keep his second daughter from getting cold at night and crying. A fan pushed air from the outside through a linen-like surface, adjusting the temperature throughout the night. The air-crib failed commercially, and although his daughter only slept inside at night, many of Skinner’s critics believed it was a cruel and experimental way to raise a child.

4. The teaching box. Skinner believed using his teaching machine to break down material bit by bit, offering rewards along the way for correct responses, could serve almost like a private tutor for students. Material was presented in sequence, and the machine provided hints and suggestions until students verbally explained a response to a problem (Skinner didn’t believe in multiple choice answers). The device wouldn’t allow students to move on in a lesson until they understood the material, and when students got any part of it right, the machine would spit out positive feedback until they reached the solution. The teaching box didn’t stick in a school setting, but many computer-based self-instruction programs today use the same idea.

5. The Verbal Summator. An auditory version of the Rorschach inkblot test, this tool allowed participants to project subconscious thoughts through sound. Skinner quickly abandoned this endeavor as personality assessment didn’t interest him, but the technology spawned several other types of auditory perception tests.
