Tracking the Twists and Turns of Hurricanes

Incredibly powerful supercomputers and a willingness to acknowledge that they’re not perfect have made weather scientists much more effective at forecasting hurricanes.

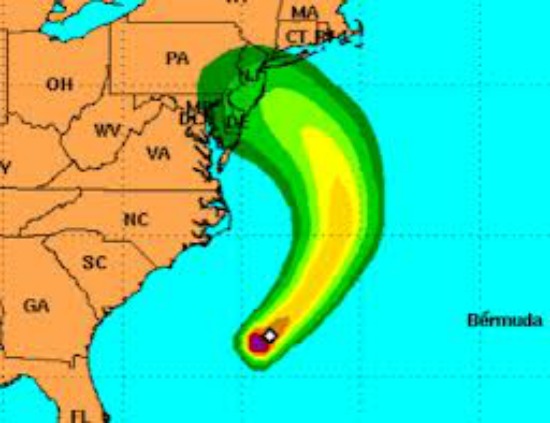

The monster storm cometh. Image courtesy of National Weather Service

I was having one of those moments of modern life disconnect. I looked down and saw on the weather map the massive nasty-looking swirl headed this way. I looked up and saw the gentle flickering of the leaves on the maple tree out back.

It was a strange feeling, sitting in the quiet while gazing at the likely path of destruction and power outage misery Hurricane Sandy will follow over the next few days. But for all the anxiety that brought, it was better to know than not. Everyone on the East Coast has had three whole days to buy batteries and toilet paper.

Probably some people near the ocean who were told to evacuate will say that it wasn’t necessary and will complain about the imprecision of the computer models that drove those decisions. Truth is, though, the science of weather forecasting has become remarkably precise.

As Nate Silver pointed out in the New York Times last month, weather forecasters have become the wizards of the prediction business, far more accurate than political pundits or economic analysts. In his piece, titled “The Weatherman Is Not a Moron,” Silver writes:

“Perhaps the most impressive gains have been in hurricane forecasting. Just 25 years ago, when the National Hurricane Center tried to predict where a hurricane would hit three days in advance of landfall, it missed by an average of 350 miles. If Hurricane Isaac, which made its unpredictable path through the Gulf of Mexico last month, had occurred in the late 1980s, the center might have projected landfall anywhere from Houston to Tallahassee, canceling untold thousands of business deals, flights and picnics in between — and damaging its reputation when the hurricane zeroed in hundreds of miles away. Now the average miss is only about 100 miles.”

A numbers game

So why the dramatic improvement? It comes down to numbers, basically the number of calculations today’s supercomputers are able to do. Take, for instance, a huge computer operation that came online in Wyoming a few weeks ago for the National Center for Atmospheric Research (NCAR). It’s called Yellowstone and it can run an astounding 1.5 quadrillion calculations per second.

Put another way, Yellowstone can finish in nine minutes a short-term weather forecast that would have taken its predecessor three hours to complete. It will be able to significantly narrow the focus of its analysis to a smaller geographical area, taking the typical 60-square-mile unit used in this kind of computer modeling and shrinking it down to seven square miles. That’s like cranking up the magnification of a microscope, providing a level of data detail that makes more precise prediction possible.
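For a rough sense of scale, here is a back-of-the-envelope sketch in Python that uses only the figures quoted above. The variable names are made up for illustration, and the arithmetic says nothing about how NCAR actually measures performance.

```python
# Figures quoted in the article; variable names are illustrative only.
old_runtime_min = 3 * 60   # predecessor: three hours, in minutes
new_runtime_min = 9        # Yellowstone: nine minutes

# How many times faster the same short-term forecast finishes.
speedup = old_runtime_min / new_runtime_min

old_cell_sq_mi = 60        # typical grid unit, in square miles
new_cell_sq_mi = 7         # finer grid unit, in square miles

# How many fine cells fit in the area one coarse cell used to cover.
cells_per_old_cell = old_cell_sq_mi / new_cell_sq_mi

print(f"Forecast finishes about {speedup:.0f} times faster")
print(f"About {cells_per_old_cell:.1f} fine cells per old coarse cell")
```

In other words, the same forecast runs roughly 20 times faster, and each patch of map gets split into between eight and nine times as many pieces.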

Here, according to NCAR, is what it will mean in tracking tornadoes and violent thunderstorms:

“Scientists will be able to simulate these small but dangerous systems in remarkable detail, zooming in on the movement of winds, raindrops, and other features at different points and times within an individual storm. By learning more about the structure and evolution of severe weather, researchers will be able to help forecasters deliver more accurate and specific predictions, such as which locations within a county are most likely to experience a tornado within the next hour.”

Breaking it down

When a supercomputer models weather, it uses millions of numbers that represent such factors as temperature, barometric pressure, wind, etc., and analyzes them through a grid system in many vertical levels, starting at the Earth’s surface and rising all the way up to the stratosphere. The more data points it can process at one time, the more accurately it can gauge how those elements interact and shape weather patterns and movement.
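To see how quickly those numbers pile up, here is a minimal sketch that counts the values in one snapshot of such a grid. The area covered, the number of vertical levels and the number of variables are all hypothetical choices for illustration, not figures from NCAR or any real forecast model. Only the 60- and 7-square-mile cell sizes come from the article.

```python
import math

def grid_values(area_sq_mi, cell_sq_mi, levels, variables):
    """Count the numbers in one snapshot of a simple weather grid.

    Every grid cell is stacked into `levels` vertical layers, and each
    layer stores `variables` values (temperature, pressure, wind, etc.).
    """
    columns = math.ceil(area_sq_mi / cell_sq_mi)  # cells across the map
    return columns * levels * variables

# Hypothetical setup: a 1,000,000-square-mile region,
# 40 vertical levels and 5 variables per grid point.
AREA, LEVELS, VARIABLES = 1_000_000, 40, 5

coarse = grid_values(AREA, 60, LEVELS, VARIABLES)
fine = grid_values(AREA, 7, LEVELS, VARIABLES)

print(f"60-square-mile cells: {coarse:,} values per snapshot")
print(f"7-square-mile cells:  {fine:,} values per snapshot")
```

Under these made-up assumptions, shrinking the cells pushes a single snapshot from a few million values to tens of millions, and a forecast has to step through many such snapshots.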

But Nate Silver contends that one of the things that make weather scientists better predictors than their counterparts in other fields is their recognition that neither they nor their numbers are perfect. Not only have they learned to use their personal knowledge of weather patterns to adapt to some of the limitations of computer modeling–it isn’t very good at seeing the big picture or recognizing old patterns if they’ve been even slightly manipulated–but they also have become more willing to publicly acknowledge the uncertainty of their forecasts.

The National Hurricane Center, for instance, no longer shows a single line to represent the expected track of a storm. Now it provides charts displaying a widening swath of color indicating areas at greatest risk, a symbol that’s become known as “the cone of chaos.”

By accepting the flaws in their knowledge, says Silver, weather researchers now understand that “even the most sophisticated computers, combing through seemingly limitless data, are painfully ill equipped to predict something as dynamic as weather all by themselves.”

Meanwhile, back here in the cone of chaos, it’s time to start practicing reading by flashlight.

Extreme measures

Here are other recent developments related to technology and extreme weather:

- What we don’t need to hear: Due to mismanagement and lack of financing, the U.S. is likely to have a gap in satellite coverage in the near future, meaning it would be without one of the key tools it uses in tracking the path of storms.

- Things that go bump in the night: New smart radar systems on airplanes will make it easier for pilots to locate and avoid violent thunderstorms.

- Definitely not a place to get stuck: China has started trial runs of the world’s first high-speed, high-altitude railway line built to withstand temperatures as low as 40 below zero.

Video bonus: Here’s the latest from the Weather Channel on the track of Hurricane Sandy.

More from Smithsonian.com

Three Quarters of Americans Now Believe Climate Change is Affecting the Weather
