Why Holograms Will Probably Never Be as Cool as They Were in “Star Wars”

But those that do exist must be preserved and archived

![Princess Leia hologram](https://tf-cmsv2-smithsonianmag-media.s3.amazonaws.com/filer/e6/47/e647f40c-3909-4177-b309-4de924d0ef09/princess-leia-hologram.jpg)

Stereoscopes entertained every Victorian home with their ability to produce three-dimensional pictures. Typewriters and later fax machines were once essential for business practices. Photo printers and video rentals came and went from high streets.

When innovative technologies like these come to the end of their lives, we have various ways of remembering them. It might be through rediscovery – hipster subculture popularizing retro technologies like valve radios or vinyl, for example. Or it might be by fitting the technology into a narrative of progress, such as the way we laugh at the brick-sized mobile phones of 30 years ago next to the sleek smartphones of today.

These stories sometimes simplify reality but they have their uses: they let companies align themselves with continual improvement and justify planned obsolescence. Even museums of science and technology tend to chronicle advances rather than document dead-ends or unachieved hopes.

But some technologies are more problematic: the expectations raised for them have failed to materialize, or have retreated into an indefinite future. Sir Clive Sinclair’s C5 electric trike is a good example. Invisible in traffic, exposed to weather and excluded from pedestrian and cycle spaces, it satisfied no one. It has not been revived as retro-tech, and fits uncomfortably into a story of transport improvement. We risk forgetting it altogether.

When we are talking about a single product like the C5, that is one thing. But in some cases we are talking about a whole genre of innovation. Take the hologram, for instance.

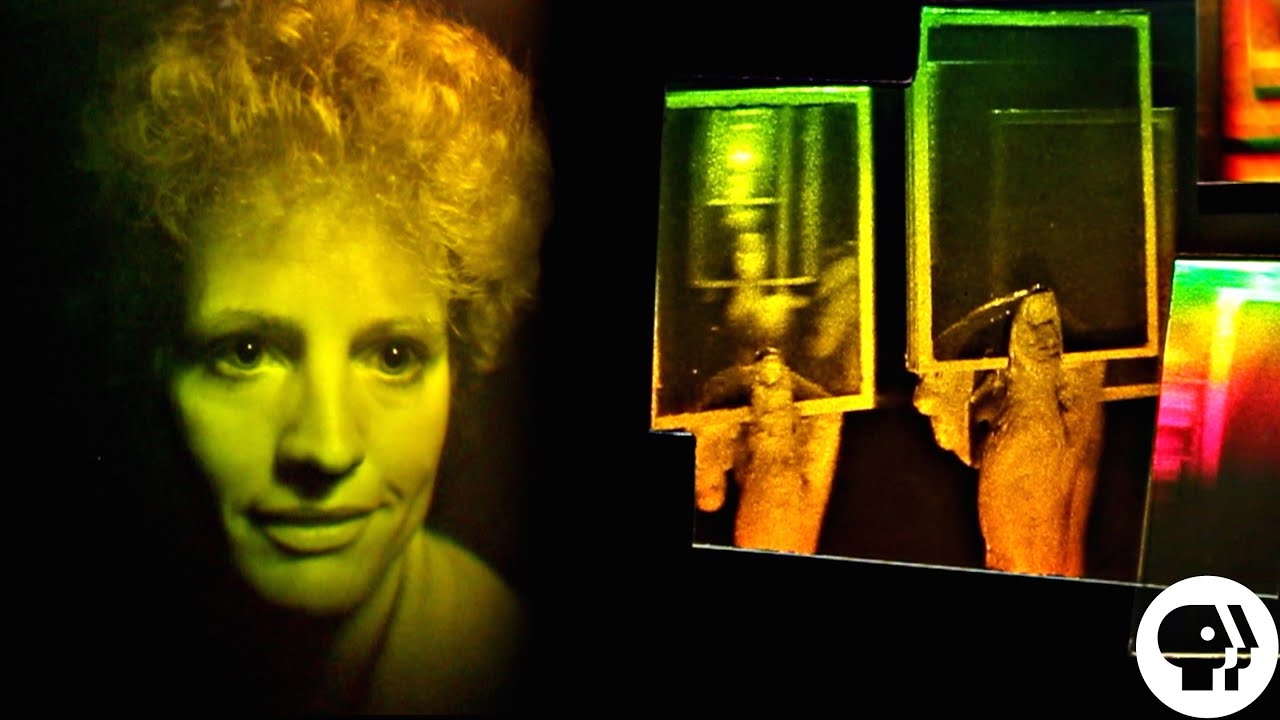

The hologram was conceived by Hungarian engineer Dennis Gabor some 70 years ago. It was breathlessly reported in the media from the early 1960s, Gabor won the Nobel Prize in Physics for it in 1971, and hologram exhibitions attracted audiences of tens of thousands during the 1980s. Today, tens of millions of people have heard of holograms, but mostly through science fiction, computer gaming or social media. None of those representations bear much resemblance to the real thing.

When I first began researching the history of the field, my raw materials were mostly typical fodder for historians: unpublished documents and interviews. I had to hunt for them in neglected boxes in the homes, garages and memories of retired engineers, artists and entrepreneurs. The companies, universities and research labs that had once kept the relevant records and equipment had often lost track of them. The reasons were not difficult to trace.

The future that never came

Holography had been conceived by Gabor as an improvement for electron microscopes, but after a decade its British developers publicly dubbed it an impractical white elephant. At the same time, American and Soviet researchers were quietly developing a Cold War application: holographic image processing showed good potential as a way of bypassing inadequate electronic computers, but it could not be publicly acknowledged.

Instead, the engineering industry publicized the technology as “lensless 3D photography” in the 1960s, predicting that traditional photography would be replaced and that holographic television and home movies were imminent. Companies and government-sponsored labs pitched in, eager to explore the rich potential of the field, generating 1,000 PhDs, 7,000 patents and 20,000 papers. But by the end of the decade, none of these applications were any closer to materializing.

From the 1970s, artists and artisans began taking up holograms as an art form and home attraction, leading to a wave of public exhibitions and a cottage industry. Entrepreneurs flocked to the field, attracted by expectations of guaranteed progress and profits. Physicist Stephen Benton of Polaroid Corporation and later MIT expressed his faith: “A satisfying and effective three-dimensional image”, he said, “is not a technological speculation, it is a historical inevitability”.

Not much had emerged a decade later, though unexpected new potential niches sprang up. Holograms were touted for magazine illustrations and billboards, for instance. And finally there was a commercial success – holographic security patches on credit cards and bank notes.

Ultimately, however, this is a story of failed endeavor. Holography has not replaced photography. Holograms do not dominate advertising or home entertainment. There is no way of generating a holographic image that behaves like the image of Princess Leia projected by R2-D2 in Star Wars, or Star Trek’s holographic doctor. So pervasive are cultural expectations even now that it is almost obligatory to follow such statements with “… yet”.

Preserving disappointment

Holography is a field of innovation where art, science, popular culture, consumerism and cultural confidences intermingled, and it was shaped as much by its audiences as by its creators. Yet it doesn’t fit the kind of stories of progress that we tend to tell. You could say the same about 3D cinema and television or the health benefits of radioactivity, for example.

When a technology does not deliver its potential, museums are less interested in holding exhibitions; universities and other institutions are less interested in devoting space to collections. When the people who keep these collections in their garages die, the material is likely to end up in landfill. As the Malian writer Amadou Hampâté Bâ observed: “When an old person dies, a library burns”. Yet it is important we remember these endeavors.

Technologies like holograms were created and consumed by an exceptional range of social groups, from scientists doing classified work to countercultural explorers. Most lived that technological faith, and many gained insights from sharing frustrating or secret experiences of innovation.

It gets left to us historians to hold these stories of unsuccessful fields together, and arguably that’s not sufficient. By remembering our endeavors with holograms or 3D cinema or radioactive therapy we may help future generations understand how technologies make society tick. For that vital reason, preserving these stories needs to be more of a priority.

This article was originally published on The Conversation. Read the original article.

Sean Johnston is a Professor of Science, Technology and Society, University of Glasgow.
