This AI Bot Fights Workplace Harassment

A new app, Spot, uses AI to help harassment and discrimination victims create and file reports without having to talk to a human


If it wasn’t clear before, it ought to be crystal clear now: workplace harassment and discrimination are problems that affect all industries, from Hollywood to politics to technology.

And it’s also clear that simply reporting incidents of harassment or discrimination to HR does not necessarily get results. Victims are often ignored, disbelieved, or even retaliated against.

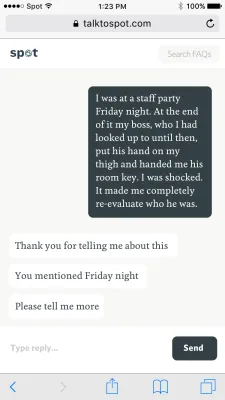

The founders of a new app for reporting harassment and discrimination think artificial intelligence might help. Their free app, Spot, uses an AI-based chatbot to interview victims and create detailed reports that can then be sent, anonymously or not, to higher-ups.

“Spot acts as a perfect memory interviewer and asks all the right questions to make sure you don’t miss anything,” says Julia Shaw, one of Spot’s co-founders.

Shaw is a psychologist at University College London who specializes in memory and criminal psychology. She’s studied police interviews and understands how difficult it can be to report highly emotional events in detail. She also knows how interviewer bias can negatively impact reporting.

“A perfect memory interviewer is calm, is neutral, and makes sure that they don’t ask leading questions,” she says. “The problem is, it’s quite difficult to train people, and to train people to actually stick to the script. People are easily led astray and distracted.”

A bot, Shaw figured, might be better than a human at taking a harassment report because a bot could be completely neutral and non-judgmental. Spot uses evidence-based cognitive interviewing techniques to elicit detail without leading the reporter or inserting bias. Accessibility is another advantage of a bot over a human. After an incident, an employee can immediately create a Spot report, rather than waiting hours or days for an HR appointment. This is critical, as memories degrade rapidly. And Spot is trained to extract the kinds of details known to be important, while not all HR professionals know what to ask.

“Often people forget, for example, to mention exactly where or when something happens,” Shaw says. “Or maybe there were witnesses there they forgot. A bot really prompts people to think through all the pieces that might be relevant to making their case stronger.”

Spot uses a messenger-style chat to solicit details from the reporter. It then creates a time-stamped document of the report, to be stored in an encrypted space, which the reporter can choose to file or not. If they do file the report, they have the option of doing so anonymously.

“In concept, the idea of an AI-driven interviewer to standardize and fully anonymize abuse reporting is great,” says Jonathan Kunstman, a psychology professor at Miami University in Ohio who studies harassment and discrimination.

But, Kunstman cautions, technology can only go so far. “Without organizational will and support, even the best technology won’t correct these problems,” he says.

Companies have to be truly motivated to deal with their internal problems and willing to look at the organizational culture that might have given rise to such behaviors, Kunstman says.

Shaw acknowledges that Spot’s power is limited. “We’re not trying to change the world, we’re trying to change reporting,” she says. “And hopefully the knock-on effect of this is people in HR effectively deal with these problems, but that’s ultimately out of our hands.”

The next step for Shaw and her co-founders Dylan Marriott and Daniel Nicolae, who met at the San Francisco AI startup studio All Turtles, is to create a program for employers to track, manage and analyze harassment and discrimination reports. They hope the current cultural reckoning with issues of harassment will spur employers to take such problems seriously.

“I think the #metoo movement and the widespread coming forward of instances of harassment and discrimination at work is going to make people realize this is a problem, possibly a systemic problem,” Shaw says. “Just because you haven’t heard of it happening in your company doesn’t mean it doesn’t happen.”

/https://tf-cmsv2-smithsonianmag-media.s3.amazonaws.com/accounts/headshot/matchar.png)
