How the First Man-Made Nuclear Reactor Reshaped Science and Society

In December 1942, Chicago Pile-1 ushered in an age of frightening possibility

![](https://tf-cmsv2-smithsonianmag-media.s3.amazonaws.com/filer/5c/74/5c7461ba-6ccd-4a4d-a6bd-849a9da3a18d/nukes4.jpg)

It was 75 years ago, beneath the bleachers of a University of Chicago football field, that scientists took the first step toward harnessing the power of the nuclear fission chain reaction. Their research initiated the Atomic Age and kicked off in earnest the Manhattan Project’s race toward a weapon of unimaginable might. Later, the same principle would spur construction of the nuclear power plants that today supply about 20 percent of America’s electricity. From medicine to art, the awesome and terrible potential of splitting the atom has left few aspects of our lives untouched.

The story begins in late 1938, when the work of chemists Otto Hahn and Fritz Strassmann and physicist Lise Meitner led to the discovery that the atom, whose very name derives from the Greek for “indivisible,” could in fact be split apart. In remote collaboration with Meitner, a Jewish refugee from Nazi Germany who had settled in Stockholm, Sweden, Hahn and Strassmann bombarded large, unstable uranium atoms with tiny neutrons at the Kaiser Wilhelm Institute for Chemistry in Berlin. To their surprise, they found that the process could produce barium, an element much lighter than uranium. This revealed that it was possible to split uranium nuclei into less massive, chemically distinct components.

The trio of researchers knew instantly that they were onto something major. Changing the very identity of an element was once the fancy of alchemists: now, it was scientific reality. Yet at the time, they had only an inkling of the many scientific and cultural revolutions their discovery would spark.

Theoretical work undertaken by Meitner and her nephew Otto Frisch quickly expanded on this initial finding: a paper they published in Nature in February 1939 outlined not only the mechanics of fission but also its astonishing energy output. When a heavy uranium nucleus bursts into lighter fragments, the products weigh slightly less than what went in, and that missing mass is released as an enormous amount of energy. What’s more, experiments soon showed that the cleft atoms spat out stray neutrons, which were themselves capable of triggering fission in other nearby nuclei.
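To give a sense of the scale Meitner and Frisch were describing, here is a back-of-the-envelope sketch in Python. It assumes the commonly quoted round figure of about 200 MeV released per fission of uranium-235 and, hypothetically, that every atom in one kilogram of pure U-235 splits; real reactors and weapons fission only a fraction of their fuel. The comparison value for burning carbon (about 393.5 kJ per mole) is a standard textbook figure, and the function names are illustrative only.

```python
# Back-of-the-envelope fission energy estimate (illustrative, not a reactor model).

MEV_TO_J = 1.602176634e-13             # joules per MeV
AVOGADRO = 6.02214076e23               # atoms per mole
U235_MOLAR_MASS_G = 235.04             # grams per mole of uranium-235 (approximate)
MEV_PER_FISSION = 200.0                # assumption: commonly quoted round figure
J_PER_KILOTON_TNT = 4.184e12           # conventional definition of a kiloton of TNT
CARBON_COMBUSTION_J_PER_MOL = 393.5e3  # C + O2 -> CO2, magnitude of standard enthalpy


def fission_energy_joules(mass_kg: float) -> float:
    """Energy if every U-235 atom in mass_kg fissioned (hypothetical upper bound)."""
    atoms = mass_kg * 1000.0 / U235_MOLAR_MASS_G * AVOGADRO
    return atoms * MEV_PER_FISSION * MEV_TO_J


def energy_ratio_per_atom() -> float:
    """How many times more energy one fission releases than burning one carbon atom."""
    fission_j = MEV_PER_FISSION * MEV_TO_J
    carbon_j = CARBON_COMBUSTION_J_PER_MOL / AVOGADRO
    return fission_j / carbon_j


if __name__ == "__main__":
    joules = fission_energy_joules(1.0)
    print(f"1 kg U-235: {joules:.2e} J, about {joules / J_PER_KILOTON_TNT:.1f} kt of TNT")
    print(f"Per atom, fission beats carbon combustion by ~{energy_ratio_per_atom():.1e}x")
```

With these inputs, one kilogram works out to roughly 8 × 10^13 joules, on the order of 20 kilotons of TNT, and a single fission releases tens of millions of times more energy than a single chemical reaction of the kind that powers a coal fire.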

After an American team at Columbia University promptly replicated the Berlin result, it was clear that the power of atom-splitting was no joke. Given the fraught geopolitical climate of the time, the rush to capitalize on this new technology took on tremendous significance. The world itself resembled an unstable atom on the brink of self-destruction. In the United States, President Franklin Roosevelt was growing increasingly concerned with the ascent of charismatic tyrants overseas.

For some chemists and physicists, the situation felt even more dire. “Scientists, some of whom [including Albert Einstein and the Hungarian physicist Leo Szilárd] were refugees from fascist Europe, knew what was possible,” says University of Chicago physics professor Eric Isaacs. “They knew Adolf Hitler. And with their colleagues and their peers here in America, they very quickly realized that now that we had fission, it would certainly be possible to use that energy in nefarious ways.”

Particularly frightening was the possibility of stringing together a chain of fission reactions to generate enough energy to bring about real destruction. In August of 1939, this concern prompted Einstein and Szilárd to meet and draft a letter to Roosevelt, alerting him to the danger of Germany creating a nuclear bomb and exhorting him to begin a program of intensive domestic research. Einstein, who, like Lise Meitner, had abandoned his professorship in Germany as anti-Semitic sentiment took hold, signed the grave message, ensuring that it would leave a deep impression on the president.

One month later, Hitler’s army marched into Poland, igniting World War II. As Isaacs describes, a reluctant Roosevelt soon came around to Szilárd’s way of thinking, and saw the need for the Allies to beat Germany to a nuclear weapon. To achieve that end, he formally enlisted the aid of a committed, supremely talented group of nuclear researchers. “I have convened a board,” Roosevelt wrote in a follow-up letter to Einstein, “to thoroughly investigate the possibilities of your suggestion regarding the element of uranium.”

“Einstein’s letter took a little while to settle in,” Isaacs says, “but once it did, the funding started. And Arthur Holly Compton, who was the head of the University of Chicago physics department, was able to collect a dream team of scientists—chemists, physicists, metallurgists—all here at the university by 1941. Including Enrico Fermi, including Szilárd. Right here on campus. And that’s where they did the experiment.”

The dream team’s goal was to produce a self-sustaining series of fission events in a controlled environment: in other words, a nuclear chain reaction. Hahn and Strassmann had observed fission in a few isolated atoms. Now Compton, Fermi and Szilárd wanted to string together billions of fissions, with the neutrons released by each fission going on to trigger further fissions. As long as each fission led, on average, to more than one new fission, the effect would grow exponentially, and so too would its energy output.
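The difference between a chain that fizzles, one that holds steady and one that grows exponentially can be sketched with a deliberately simplified Python model. It assumes a single constant multiplication factor k, the average number of new fissions each fission triggers from one generation to the next, and ignores geometry, delayed neutrons and fuel depletion. The k values and function names below are hypothetical, chosen only to show the three regimes; they are not figures from the Chicago team's records.

```python
# Toy generation-by-generation model of a neutron chain reaction.
# Assumption: one constant multiplication factor k per generation,
# ignoring geometry, delayed neutrons and fuel burn-up.


def neutron_population(k: float, generations: int, start: float = 1.0) -> list[float]:
    """Return neutron counts for generations 0..generations, multiplying by k each step."""
    counts = [start]
    for _ in range(generations):
        counts.append(counts[-1] * k)
    return counts


def describe(k: float) -> str:
    """Classify the chain reaction by its multiplication factor."""
    if k < 1.0:
        return "subcritical: the chain dies out"
    if k == 1.0:
        return "critical: the chain sustains itself at a steady level"
    return "supercritical: the chain grows exponentially"


if __name__ == "__main__":
    # Hypothetical k values chosen only to illustrate the three regimes.
    for k in (0.95, 1.0, 1.05):
        final = neutron_population(k, generations=100)[-1]
        print(f"k = {k}: {describe(k)}; after 100 generations: {final:.3g} neutrons")
```

In this picture, neutron-absorbing cadmium rods work by lowering k: pushed in, they make the pile subcritical; pulled out gradually, they let it climb just past the critical point, where the growth is exponential but slow enough to manage.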

To perform the experiment, they would have to create the world’s first man-made nuclear reactor: a pile of graphite bricks embedded with lumps of uranium and uranium oxide, held in a wooden frame and measuring roughly 25 feet wide and 20 feet high. Within the device, cadmium control rods soaked up excess neutrons from the fission reactions, preventing a catastrophic loss of control. In its niche beneath the stands at the university’s Stagg Field, the reactor, designed and assembled in a matter of weeks, achieved a self-sustaining nuclear chain reaction on December 2, 1942. Its power output was tiny, about half a watt, but it proved that the energy of fission could be released under control.

The work of the Chicago all-star science team constituted the critical first step toward the Manhattan Project’s goal of developing a nuclear bomb before the Axis powers. That goal would be realized in 1945, when the United States dropped atomic bombs on Hiroshima and Nagasaki, bringing a deadly and provocative end to the war. (“Woe is me,” Einstein is reported to have said upon hearing the news.) And yet the breakthrough of Chicago Pile-1, known as CP-1, represented more than a step toward greater military might for the U.S. It demonstrated humanity’s capacity to tap into the very hearts of atoms for fuel.

One of the most obvious legacies of the CP-1 experiment is the growth of the nuclear power industry, which physicist Enrico Fermi was instrumental in kickstarting after his time with the covert Chicago research outfit. “Fermi really had no interest in weapons in the long run,” says Isaacs. “He did of course work on the Manhattan Project, and he was totally dedicated—but when the war was over, he continued to build reactors, with the idea that they would be used for civilian use, for power generation.”

Isaacs notes that the controlled fission demonstrated with CP-1 also paved the way for the incorporation of nuclear technology into medicine (think reactor-produced radioactive isotopes used in diagnostic imaging, as well as cancer therapies) and agriculture (Isaacs cites as one example an ongoing effort to genetically diversify bananas by irradiating them to induce mutations). Yet one of the largest-scale impacts of CP-1 was on the practice of science itself.

“If you think about what happened just following the war,” Isaacs says, “some of the first things that were created were the federal agencies that fund research in this country: the Atomic Energy Commission, [whose research functions were later absorbed by] the Department of Energy, and years later, the National Institutes of Health and National Science Foundation.” These agencies were created or greatly expanded after the success of CP-1, and of the Manhattan Project more broadly, renewed public faith in science and technology.

High-profile “dream team” scientific collaboration also rose to prominence as a result of the CP-1 effort. Isaacs sees present-day multi-institution cancer research, for example, as the natural extension of the Manhattan Project model: bring the brightest minds from across the country together and let the magic happen. Thanks to the internet, modern researchers often share data and hypotheses digitally instead of in person, but the rapid-fire, goal-oriented ideation and prototyping of the Chicago Pile-1 days is very much alive and well.

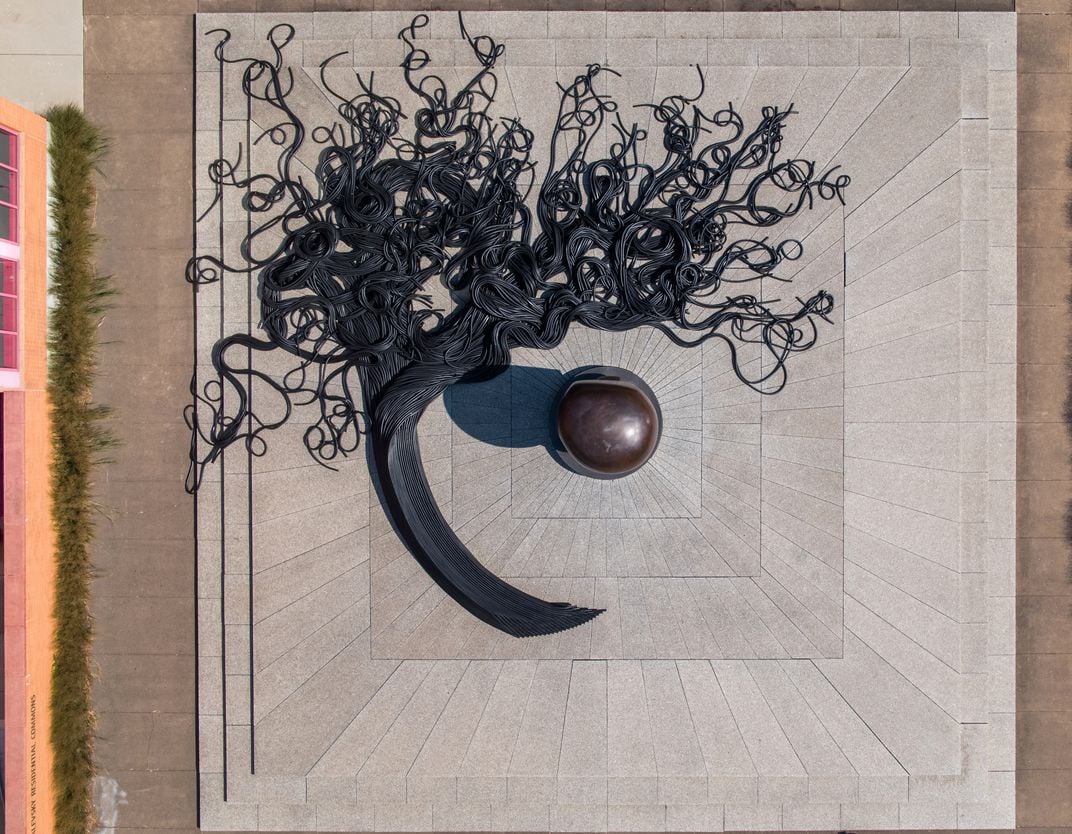

Stagg Field was closed in 1957, and the bleachers that once sheltered the world’s first man-made nuclear reactor were torn down. The site is now a humble gray quadrangle, encircled by university research facilities and libraries. At the heart of this open space, a stark bronze sculpture with a rounded carapace memorializes the atomic breakthrough. Its shape could be interpreted either as a protective shield or as the crest of a mushroom cloud. Titled “Nuclear Energy,” the piece was specially commissioned from the abstract sculptor Henry Moore.

“Is it dissolving,” University of Chicago art history chair Christine Mehring asks of Moore’s cryptic sculpture, “or is it evolving?” In the nuclear world we now occupy, into which we were delivered those 75 years ago, such questions seem fated to haunt us forever.
