How Do Our Brains Process Music?

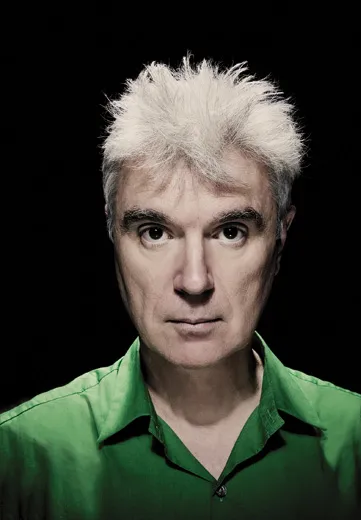

In an excerpt from his new book, David Byrne explains why, sometimes, he prefers hearing nothing


I listen to music only at very specific times. When I go out to hear it live, most obviously. When I’m cooking or doing the dishes I put on music, and sometimes other people are present. When I’m jogging or cycling to and from work down New York’s West Side Highway bike path, or if I’m in a rented car on the rare occasions I have to drive somewhere, I listen alone. And when I’m writing and recording music, I listen to what I’m working on. But that’s it.

I find music somewhat intrusive in restaurants or bars. Maybe due to my involvement with it, I feel I have to either listen intently or tune it out. Mostly I tune it out; I often don’t even notice if a Talking Heads song is playing in most public places. Sadly, most music then becomes (for me) an annoying sonic layer that just adds to the background noise.

As music becomes less of a thing—a cylinder, a cassette, a disc—and more ephemeral, perhaps we will start to assign an increasing value to live performances again. After years of hoarding LPs and CDs, I have to admit I’m now getting rid of them. I occasionally pop a CD into a player, but I’ve pretty much completely converted to listening to MP3s either on my computer or, gulp, my phone! For me, music is becoming dematerialized, a state that is more truthful to its nature, I suspect. Technology has brought us full circle.

I go to at least one live performance a week, sometimes with friends, sometimes alone. There are other people there. Often there is beer, too. After more than a hundred years of technological innovation, the digitization of music has inadvertently had the effect of emphasizing its social function. Not only do we still give friends copies of music that excites us, but increasingly we have come to value the social aspect of a live performance more than we used to. Music technology in some ways appears to have been on a trajectory in which the end result is that it will destroy and devalue itself. It will succeed completely when it self-destructs. The technology is useful and convenient, but it has, in the end, reduced its own value and increased the value of the things it has never been able to capture or reproduce.

Technology has altered the way music sounds, how it’s composed and how we experience it. It has also flooded the world with music. The world is awash with (mostly) recorded sounds. We used to have to pay for music or make it ourselves; playing, hearing and experiencing it was exceptional, something rare and special. Now hearing it is ubiquitous, and silence is the rarity that we pay for and savor.

Does our enjoyment of music—our ability to find a sequence of sounds emotionally affecting—have some neurological basis? From an evolutionary standpoint, does enjoying music provide any advantage? Is music of any truly practical use, or is it simply baggage that got carried along as we evolved other more obviously useful adaptations? Paleontologist Stephen Jay Gould and biologist Richard Lewontin wrote a paper in 1979 claiming that some of our skills and abilities might be like spandrels—the architectural negative spaces above the curve of the arches of buildings—details that weren’t originally designed as autonomous entities, but that came into being as a result of other, more practical elements around them.

Dale Purves, a professor at Duke University, studied this question with his colleagues David Schwartz and Catherine Howe, and they think they might have some answers. They discovered that the sonic range that matters and interests us the most is identical to the range of sounds we ourselves produce. Our ears and our brains have evolved to catch subtle nuances mainly within that range, and we hear less, or often nothing at all, outside of it. We can’t hear what bats hear, or the low-frequency (infrasonic) sounds that some whales use. For the most part, music also falls into the range of what we can hear. Though some of the harmonics that give voices and instruments their characteristic sounds are beyond our hearing range, the effects they produce are not. The part of our brain that analyzes sounds in those musical frequencies that overlap with the sounds we ourselves make is larger and more developed, just as the visual analysis of faces is a specialty of another highly developed part of the brain.
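The point about harmonics lying beyond our hearing can be made concrete with a little arithmetic. For voices and most pitched instruments, the overtones fall at (approximately) whole-number multiples of the fundamental frequency, so even a mid-range pitch has upper harmonics above the roughly 20 Hz to 20 kHz range usually cited for young adult hearing. The Python sketch that follows assumes those textbook limits (they vary between people, and the upper one drops with age) and uses A4 at 440 Hz as an example tone; the function names are illustrative only.

```python
from typing import List, Tuple

# Assumed limits of hearing, in hertz: the commonly cited 20 Hz to 20 kHz range
# for young adults. Real limits vary by person, and the upper one falls with age.
HEARING_LOW_HZ = 20.0
HEARING_HIGH_HZ = 20_000.0


def harmonics(fundamental_hz: float, count: int) -> List[float]:
    """Return the first `count` harmonics, modeled as n * fundamental for n = 1..count."""
    return [n * fundamental_hz for n in range(1, count + 1)]


def split_by_audibility(freqs: List[float]) -> Tuple[List[float], List[float]]:
    """Separate frequencies into those inside and outside the assumed hearing range."""
    audible = [f for f in freqs if HEARING_LOW_HZ <= f <= HEARING_HIGH_HZ]
    inaudible = [f for f in freqs if not (HEARING_LOW_HZ <= f <= HEARING_HIGH_HZ)]
    return audible, inaudible


if __name__ == "__main__":
    # Example tone: A4, the usual 440 Hz tuning pitch.
    audible, inaudible = split_by_audibility(harmonics(440.0, 60))
    print(len(audible), inaudible[0])
```

Run as a script, it prints `45 20240.0`: the first 45 harmonics of A4 fit under the assumed 20 kHz ceiling, and the 46th (20,240 Hz) is the first to fall above it.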

The Purves group also added to this the assumption that periodic sounds (sounds that repeat regularly) are generally indicative of living things, and are therefore more interesting to us. A sound that occurs over and over could be something to be wary of, or it could lead to a friend, or a source of food or water. We can see how these parameters and regions of interest narrow down toward an area of sounds similar to what we call music. Purves surmised that human speech therefore influenced the evolution of the human auditory system as well as the part of the brain that processes those audio signals. Our vocalizations, and our ability to perceive their nuances and subtlety, co-evolved.

In a UCLA study, neurologists Istvan Molnar-Szakacs and Katie Overy watched brain scans to see which neurons fired while people and monkeys observed other people and monkeys perform specific actions or experience specific emotions. They determined that a set of neurons in the observer “mirrors” what they saw happening in the observed. If you are watching an athlete, for example, the neurons that are associated with the same muscles the athlete is using will fire. Our muscles don’t move, and sadly there’s no virtual workout or health benefit from watching other people exert themselves, but the neurons do act as if we are mimicking the observed. This mirror effect goes for emotional signals as well. When we see someone frown or smile, the neurons associated with those facial muscles will fire. But—and here’s the significant part—the emotional neurons associated with those feelings fire as well. Visual and auditory clues trigger empathetic neurons. Corny but true: If you smile you will make other people happy. We feel what the other is feeling—maybe not as strongly, or as profoundly—but empathy seems to be built into our neurology. It has been proposed that this shared representation (as neuroscientists call it) is essential for any type of communication. The ability to experience a shared representation is how we know what the other person is getting at, what they’re talking about. If we didn’t have this means of sharing common references, we wouldn’t be able to communicate.

It’s sort of stupidly obvious—of course we feel what others are feeling, at least to some extent. If we didn’t, then why would we ever cry at the movies or smile when we heard a love song? The border between what you feel and what I feel is porous. That we are social animals is deeply ingrained and makes us what we are. We think of ourselves as individuals, but to some extent we are not; our very cells are joined to the group by these evolved empathic reactions to others. This mirroring isn’t just emotional, it’s social and physical, too. When someone gets hurt we “feel” their pain, though we don’t collapse in agony. And when a singer throws back his head and lets loose, we understand that as well. We have an interior image of what he is going through when his body assumes that shape.

We anthropomorphize abstract sounds, too. We can read emotions when we hear someone’s footsteps. Simple feelings—sadness, happiness and anger—are pretty easily detected. Footsteps might seem an obvious example, but it shows that we connect all sorts of sounds to our assumptions about what emotion, feeling or sensation generated that sound.

The UCLA study proposed that our appreciation and feeling for music are deeply dependent on mirror neurons. When you watch, or even just hear, someone play an instrument, the neurons associated with the muscles required to play that instrument fire. Listening to a piano, we “feel” those hand and arm movements, and as any air guitarist will tell you, when you hear or see a scorching solo, you are “playing” it, too. Do you have to know how to play the piano to be able to mirror a piano player? Edward W. Large at Florida Atlantic University scanned the brains of people with and without music experience as they listened to Chopin. As you might guess, the mirror neuron system lit up in the musicians who were tested, but somewhat surprisingly, it flashed in non-musicians as well. So, playing air guitar isn’t as weird as it sometimes seems. The UCLA group contends that all of our means of communication—auditory, musical, linguistic, visual—have motor and muscular activities at their root. By reading and intuiting the intentions behind those motor activities, we connect with the underlying emotions. Our physical state and our emotional state are inseparable—by perceiving one, an observer can deduce the other.

People dance to music as well, and neurological mirroring might explain why hearing rhythmic music inspires us to move, and to move in very specific ways. Music, more than many of the arts, triggers a whole host of neurons. Multiple regions of the brain fire upon hearing music: muscular, auditory, visual, linguistic. That’s why some folks who have completely lost their language abilities can still articulate a text when it is sung. Oliver Sacks wrote about a brain-damaged man who discovered that he could sing his way through his mundane daily routines, and only by doing so could he remember how to complete simple tasks like getting dressed. Melodic intonation therapy is the name for a group of therapeutic techniques that were based on this discovery.

Mirror neurons are also predictive. When we observe an action, posture, gesture or a facial expression, we have a good idea, based on our past experience, of what is coming next. Some people on the autism spectrum might not intuit all those meanings as easily as others, and I’m sure I’m not alone in having been accused of missing what friends thought were obvious cues or signals. But most folks catch at least a large percentage of them. Maybe our innate love of narrative has some predictive, neurological basis; we have developed the ability to feel where a story might be going. Ditto with a melody. We might sense the emotionally resonant rise and fall of a melody, a repetition, a musical build, and we have expectations, based on experience, about where those actions are leading: expectations that will be confirmed or slightly redirected depending on the composer or performer. As cognitive scientist Daniel Levitin points out, too much confirmation (when something happens exactly as it did before) causes us to get bored and to tune out. Little variations keep us alert, as well as serving to draw attention to musical moments that are critical to the narrative.

Music does so many things to us that one can’t simply say, as many do, “Oh, I love all kinds of music.” Really? But some forms of music are diametrically opposed to one another! You can’t love them all. Not all the time, anyway.

In 1969, Unesco passed a resolution outlining a human right that doesn’t get talked about much: the right to silence. I think they’re referring to what happens if a noisy factory gets built beside your house, or a shooting range, or if a disco opens downstairs. They don’t mean you can demand that a restaurant turn off the classic rock tunes it’s playing, or that you can muzzle the guy next to you on the train yelling into his cellphone. It’s a nice thought, though: despite our innate dread of absolute silence, we should have the right to take an occasional aural break, to experience, however briefly, a moment or two of sonic fresh air. To have a meditative moment, a head-clearing space, is a nice idea for a human right.

John Cage wrote a book called, somewhat ironically, Silence. Ironic because he was increasingly becoming notorious for noise and chaos in his compositions. He once claimed that silence doesn’t exist for us. In a quest to experience it, he went into an anechoic chamber, a room isolated from all outside sounds, with walls designed to inhibit the reflection of sounds. A dead space, acoustically. After a few moments he heard a thumping and whooshing, and was informed those sounds were his own heartbeat and the sound of his blood rushing through his veins and arteries. They were louder than he might have expected, but okay. After a while, he heard another sound, a high whine, and was informed that this was his nervous system. He realized then that for human beings there was no such thing as true silence. This anecdote became his way of explaining why, rather than fighting to shut out the sounds of the world and compartmentalize music as something outside of that noisy, uncontrollable world of sounds, he decided to let them in: “Let sounds be themselves rather than vehicles for manmade theories or expressions of human sentiments.” Conceptually at least, the entire world now became music.

If music is inherent in all things and places, then why not let music play itself? The composer, in the traditional sense, might no longer be necessary. Let the planets and spheres spin. Musician Bernie Krause has just come out with a book about “biophony,” the world of music and sounds made by animals, insects and the nonhuman environment. Music made by self-organizing systems means that anyone or anything can make it, and anyone can walk away from it. John Cage said the contemporary composer “resembles the maker of a camera who allows someone else to take the picture.” That’s sort of the elimination of authorship, at least in the accepted sense. He felt that traditional music, with its scores that instruct which note should be played and when, is not a reflection of the processes and algorithms that activate and create the world around us. The world indeed offers us restricted possibilities and opportunities, but there are always options, and more than one way for things to turn out. He and others wondered if maybe music might partake of this emergent process.

A small device made in China takes this idea one step further. The Buddha Machine is a music player that uses random algorithms to organize a series of soothing tones and thereby create never-ending, non-repeating melodies. The programmer who made the device and organized its sounds replaces the composer, effectively leaving no performer. The composer, the instrument and the performer are all one machine. These are not very sophisticated devices, though one can envision a day when all types of music might be machine-generated. The basic, commonly used patterns that occur in various genres could become the algorithms that guide the manufacture of sounds. One might view much of corporate pop and hip-hop as being machine-made—their formulas are well established, and one need only choose from a variety of available hooks and beats, and an endless recombinant stream of radio-friendly music emerges. Though this industrial approach is often frowned on, its machine-made nature could just as well be a compliment—it returns musical authorship to the ether. All these developments imply that we’ve come full circle: We’ve returned to the idea that our universe might be permeated with music.
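The excerpt describes the Buddha Machine only as using “random algorithms” to arrange soothing tones into a never-ending stream. As a rough illustration of that idea, and not the device’s actual design (which the text does not describe), here is a minimal Python sketch under stated assumptions: a hypothetical five-tone pentatonic palette, and a single rule that forbids playing the same tone twice in a row. The names `TONES` and `endless_melody` are made up for this example.

```python
import itertools
import random
from typing import Iterator, Optional, Sequence

# Hypothetical tone palette (C major pentatonic, as note names). The text does
# not say which tones the Buddha Machine uses; this set is an assumption.
TONES = ["C4", "D4", "E4", "G4", "A4"]


def endless_melody(tones: Sequence[str], seed: Optional[int] = None) -> Iterator[str]:
    """Yield randomly chosen tones forever, never the same tone twice in a row."""
    palette = list(dict.fromkeys(tones))  # drop duplicate tones, keep their order
    if len(palette) < 2:
        raise ValueError("need at least two distinct tones to avoid immediate repeats")
    rng = random.Random(seed)  # a seed makes a run reproducible; None uses fresh entropy
    previous: Optional[str] = None
    while True:
        # Choose among every tone except the one just played.
        previous = rng.choice([t for t in palette if t != previous])
        yield previous


if __name__ == "__main__":
    # The stream never ends on its own, so take a finite slice of it.
    print(list(itertools.islice(endless_melody(TONES, seed=1), 12)))
```

“Non-repeating” in this sketch only means no immediate repeats; a pseudo-random generator does not guarantee that longer phrases never recur.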

I welcome the liberation of music from the prison of melody, rigid structure and harmony. Why not? But I also listen to music that does adhere to those guidelines. Listening to the Music of the Spheres might be glorious, but I crave a concise song now and then, a narrative or a snapshot more than a whole universe. I can enjoy a movie or read a book in which nothing much happens, but I’m deeply conservative as well—if a song establishes itself within the pop genre, then I listen with certain expectations. I can become bored more easily by a pop song that doesn’t play by its own rules than by a contemporary composition that is repetitive and static. I like a good story and I also like staring at the sea—do I have to choose between the two?

Excerpted from How Music Works by David Byrne, published by McSweeney's Books, © 2012 by Todo Mundo Ltd.