Can a Video Game Train You To Hear Better In a Crowded Room?

A new study finds it’s possible to teach the brain to better distinguish between speech and background noise

Roughly 15 percent of Americans report some sort of hearing difficulty; trouble understanding conversations in noisy environments is one of the most common complaints. Unfortunately, there’s not much doctors or audiologists can do. Hearing aids can amplify things for ears that can’t quite pick up certain sounds, but they don’t distinguish between the voice of a friend at a party and the music in the background. The problem is not only one of technology, but also of brain wiring.

Most hearing aid users say that even with their hearing aids, they still have difficulty communicating in noisy environments. As a neuroscientist who studies speech perception, I see this issue come up in much of my own research, as well as in that of many others. The reason isn’t that they can’t hear the sounds; it’s that their brains can’t pick out the conversation from the background chatter.

Harvard neuroscientists Dan Polley and Jonathon Whitton may have found a solution, by harnessing the brain’s incredible ability to learn and change itself. They have discovered that it may be possible for the brain to relearn how to distinguish between speech and noise. And the key to learning that skill could be a video game.

The hearing brain

People with hearing aids often report being frustrated with how their hearing aids handle noisy situations; it’s a key reason many people with hearing loss don’t wear hearing aids, even if they own them. People with untreated hearing loss – including those who don’t wear their hearing aids – are at increased risk of social isolation, depression and even dementia.

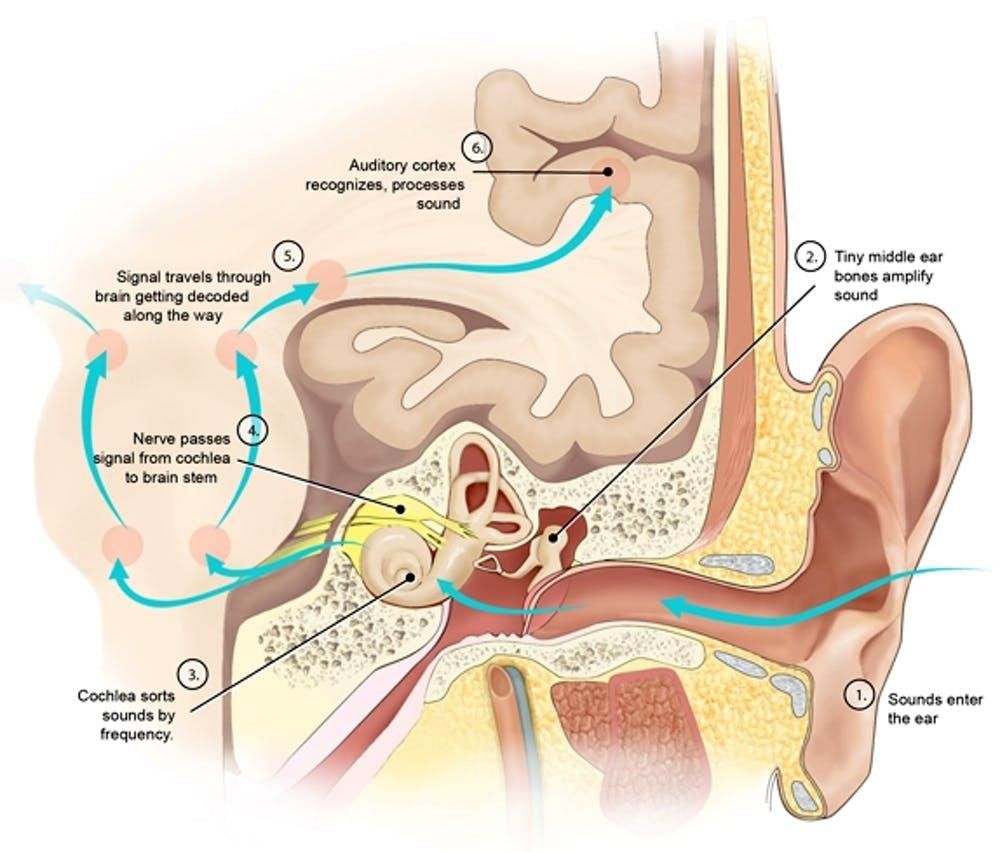

For many people with hearing difficulties, the problem isn’t in their ears – it’s in their brain. In everyday environments, sound waves emitted from every object around you mix together before they enter your ear. Your brain must then sort out which bits of sound belong to each source in the environment and correctly group these bits of sound together, ignoring some – like the hum of the refrigerator – and focusing on others, like a relative calling out from the next room.

This ability to distinguish, process and make sense of sound is one of the first things to break down in hearing loss from normal aging, or from neurological disorders like ADD/ADHD, autism and dyslexia. It’s so complex that for decades, auditory neuroscientists like me have been trying to understand how the brain does this, and how we can help people who have difficulty hearing in noisy surroundings.

Video games to the rescue

In their new study, Polley, Whitton and their colleagues created a video game to train players’ brains to distinguish sounds better. Players trace their fingers around a blank tablet screen, seeking to identify the edges of a hidden shape. They get continuous auditory feedback on how they’re doing through headphones, which play sounds partially obscured by background noise. It works a bit like the “hotter or colder” children’s game: The only way to find the edges of the shape is to listen carefully to the sounds and notice how they change as the finger moves. As the player gets better at the game, the background noise gets louder, making the game more challenging.
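For readers who think in code, the loop described above can be sketched roughly as follows. This is only an illustration, not the researchers’ actual software: the function names, the pitch range, the decibel values and the simple “up after a success, down after a miss” rule are all assumptions made for the example.

```python
def cue_frequency_hz(distance_to_edge, max_distance, low_hz=200.0, high_hz=800.0):
    """Hypothetical 'hotter or colder' cue: pitch rises as the finger nears the edge."""
    # Clamp the normalized distance to [0, 1], then invert it into "closeness".
    closeness = 1.0 - min(max(distance_to_edge / max_distance, 0.0), 1.0)
    return low_hz + closeness * (high_hz - low_hz)


def next_noise_level_db(noise_db, found_shape, step_db=2.0, min_db=40.0, max_db=80.0):
    """Assumed difficulty rule: louder noise after a success, quieter after a miss."""
    change = step_db if found_shape else -step_db
    return min(max(noise_db + change, min_db), max_db)


# Four made-up rounds: two successes, one miss, one success.
noise_db = 50.0
for found_shape in [True, True, False, True]:
    noise_db = next_noise_level_db(noise_db, found_shape)
print(noise_db)  # 54.0
```

The point of the sketch is the structure rather than the numbers: every finger movement produces an immediate change in the sound, and the listening task gets harder only as the player improves.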

To determine whether this video game could help people in their everyday lives, the researchers recruited 24 older adults with hearing loss. Half of the participants played the auditory training game. The other 12 played an equally challenging game in which they heard nonsense sentences (like “Ready Barron, go to green four now”) amid background noise. Those people had to remember, and later identify, which words they had heard in the sentences. Importantly, this memory task tested hearing, but differed from the video game training in that it did not test people’s ability to distinguish subtle differences in sounds.

After eight weeks of training on their respective games, in several sessions a week at home on a tablet, the memory group was no better at distinguishing speech from background noise. But the people who played the auditory video game were able to understand 25 percent more words and sentences in background noise, a benefit about three times greater than the one they got from their hearing aids alone. This was particularly surprising because the video game group showed improvements in speech understanding even though their training involved only non-verbal sounds.

Fast feedback

In conversations and interviews, Polley admits that he doesn’t know exactly why the game works, but he suspects that the structure of the game is the key: The brain is able to predict how the video game’s sound will change with each finger movement, and then gets immediate feedback about what actually happened.

This is the same sort of feedback that people receive during activities like sports and playing a musical instrument. For example, a violinist anticipates the next note of a piece, places her finger on the appropriate spot along the neck of the violin, and then listens to the sound of the resulting note and how it fits with the other instruments of the orchestra. If any pitch adjustments are needed, her finger almost immediately shifts to the correct spot. And she must do all of this while ignoring extraneous sounds, like the other melody in the woodwind section or the timpani drumroll.

There is some evidence that periods of intense musical training, especially in childhood, can lead to benefits that generalize to everyday communication. For example, my previous work examined the idea that musicians often outperform non-musicians on tests of speech understanding in background noise, and that musicians’ brains might process speech sounds more precisely than the brains of non-musicians.

But just like musical training, practice seems to be necessary for maintaining the ability to understand speech in noisy backgrounds. Two months after the video game training ended, the researchers tested the participants’ speech understanding abilities again, and found that the benefits of the video game had vanished.

A future with better hearing

Despite the remaining mysteries about how exactly this sound-based video game can improve speech perception, this preliminary result raises exciting possibilities for future clinical therapies. It also gives scientists like me further insight into how the brain learns new perceptual skills, by demonstrating that even short-term training can have a dramatic effect on the ability to distinguish speech from background noise.

But what remains to be seen is which brain changes underlie these behavioral improvements. In my own research, I seek to answer that question by examining the brains of people who have undergone various types of training, observing how their brains process sound, and comparing them to people who have not undergone training. The hope is that we can learn more about how the brain changes in response to training, and how that relates to people’s perceptual abilities.

So although people should be wary of claims that brain training can improve general intelligence, this study’s results from targeted perceptual training are encouraging. One day there might be an iPhone app that can help your mother-in-law follow the conversation at a crowded restaurant, or help a student with a learning disorder focus on the teacher’s voice. Scientists just need to figure out how best to train the brain to listen.

This article was originally published on The Conversation.

Dana Boebinger, Ph.D. student in Speech and Hearing Bioscience and Technology, Harvard University