Why Fire Makes Us Human

Cooking may be more than just a part of your daily routine; it may be what made your brain as powerful as it is.

Wherever humans have gone in the world, they have carried with them two things, language and fire. As they traveled through tropical forests they hoarded the precious embers of old fires and sheltered them from downpours. When they settled the barren Arctic, they took with them the memory of fire, and recreated it in stoneware vessels filled with animal fat. Darwin himself considered these the two most significant achievements of humanity. It is, of course, impossible to imagine a human society that does not have language, but—given the right climate and an adequacy of raw wild food—could there be a primitive tribe that survives without cooking? In fact, no such people have ever been found. Nor will they be, according to a provocative theory by Harvard biologist Richard Wrangham, who believes that fire is needed to fuel the organ that makes possible all the other products of culture, language included: the human brain.

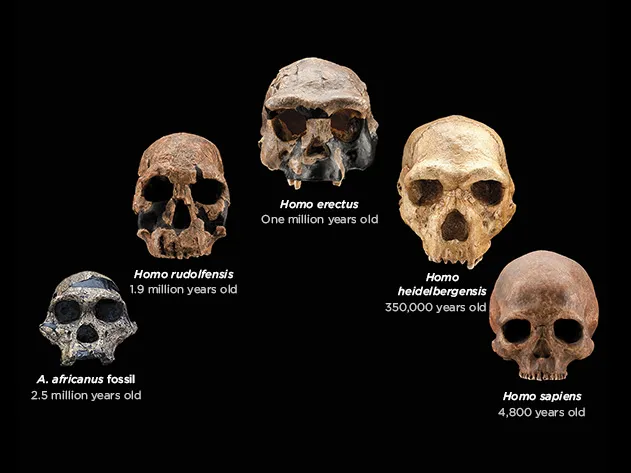

Every animal on earth is constrained by its energy budget; the calories obtained from food will stretch only so far. And for most human beings, most of the time, these calories are burned not at the gym, but invisibly, in powering the heart, the digestive system and especially the brain, in the silent work of moving molecules around within and among its 100 billion cells. A human body at rest devotes roughly one-fifth of its energy to the brain, regardless of whether it is thinking anything useful, or even thinking at all. Thus, the unprecedented increase in brain size that hominids embarked on around 1.8 million years ago had to be paid for with added calories either taken in or diverted from some other function in the body. Many anthropologists think the key breakthrough was adding meat to the diet. But Wrangham and his Harvard colleague Rachel Carmody think that’s only a part of what was going on in evolution at the time. What matters, they say, is not just how many calories you can put into your mouth, but what happens to the food once it gets there. How much useful energy does it provide, after subtracting the calories spent in chewing, swallowing and digesting? The real breakthrough, they argue, was cooking.

Wrangham, who is in his mid-60s, with an unlined face and a modest demeanor, has a fine pedigree as a primatologist, having studied chimpanzees with Jane Goodall at Gombe Stream National Park. In pursuing his research on primate nutrition he has sampled what wild monkeys and chimpanzees eat, and he finds it, by and large, repellent. The fruit of the Warburgia tree has a “hot taste” that “renders even a single fruit impossibly unpleasant for humans to ingest,” he writes from bitter experience. “But chimpanzees can eat a pile of these fruits and look eagerly for more.” Although he avoids red meat ordinarily, he ate raw goat to prove a theory that chimps combine meat with tree leaves in their mouths to facilitate chewing and swallowing. The leaves, he found, provide traction for the teeth on the slippery, rubbery surface of raw muscle.

Food is a subject on which most people have strong opinions, and Wrangham mostly excuses himself from the moral, political and aesthetic debates it provokes. Impeccably lean himself, he acknowledges blandly that some people will gain weight on the same diet that leaves others thin. “Life can be unfair,” he writes in his 2010 book Catching Fire, and his shrug is almost palpable on the page. He takes no position on the philosophical arguments for and against a raw-food diet, except to point out that it can be quite dangerous for young children. For healthy adults, it’s “a terrific way to lose weight.”

Which is, in a way, his point: Human beings evolved to eat cooked food. It is literally possible to starve to death even while filling one’s stomach with raw food. In the wild, people typically survive only a few months without cooking, even if they can obtain meat. Wrangham cites evidence that urban raw-foodists, despite year-round access to bananas, nuts and other high-quality agricultural products, as well as juicers, blenders and dehydrators, are often underweight. Of course, they may consider this desirable, but Wrangham considers it alarming that in one study half the women were malnourished to the point they stopped menstruating. They presumably are eating all they want, and may even be consuming what appears to be an adequate number of calories, based on standard USDA tables. There is growing evidence that these tables overstate, sometimes to a considerable degree, the energy that the body extracts from whole raw foods. Carmody explains that only a fraction of the calories in raw starch and protein are absorbed by the body directly via the small intestine. The remainder passes into the large bowel, where it is broken down by that organ’s ravenous population of microbes, which consume the lion’s share for themselves. Cooked food, by contrast, is mostly digested by the time it enters the colon; for the same number of calories ingested, the body gets roughly 30 percent more energy from cooked oat, wheat or potato starch than from raw, and as much as 78 percent more from the protein in a cooked egg. In Carmody’s experiments, animals given cooked food gain more weight than animals fed the same amount of raw food. And once they’ve been fed on cooked food, mice, at least, seem to prefer it.

In essence, cooking—including not only heat but also mechanical processes such as chopping and grinding—outsources some of the body’s work of digestion so that more energy is extracted from food and less expended in processing it. Cooking breaks down collagen, the connective tissue in meat, and softens the cell walls of plants to release their stores of starch and fat. The calories to fuel the bigger brains of successive species of hominids came at the expense of the energy-intensive tissue in the gut, which was shrinking at the same time—you can actually see how the barrel-shaped trunk of the apes morphed into the comparatively narrow-waisted Homo sapiens. Cooking freed up time, as well; the great apes spend four to seven hours a day just chewing, not an activity that prioritizes the intellect.

The trade-off between the gut and the brain is the key insight of the “expensive tissue hypothesis,” proposed by Leslie Aiello and Peter Wheeler in 1995. Wrangham credits this with inspiring his own thinking—except that Aiello and Wheeler identified meat-eating as the driver of human evolution, while Wrangham emphasizes cooking. “What could be more human,” he asks, “than the use of fire?”

Unsurprisingly, Wrangham’s theory appeals to people in the food world. “I’m persuaded by it,” says Michael Pollan, author of Cooked, whose opening chapter is set in the sweltering, greasy cookhouse of a whole-hog barbecue joint in North Carolina, which he sets in counterpoint to lunch with Wrangham at the Harvard Faculty Club, where they each ate a salad. “Claude Lévi-Strauss, Brillat-Savarin treated cooking as a metaphor for culture,” Pollan muses, “but if Wrangham is right, it’s not a metaphor, it’s a precondition.”

Wrangham, with his hard-won experience in eating like a chimpanzee, tends to assume that—with some exceptions such as fruit—cooked food tastes better than raw. But is this an innate mammalian preference, or just a human adaptation? Harold McGee, author of the definitive On Food and Cooking, thinks there’s an inherent appeal in the taste of cooked food, especially so-called Maillard compounds. These are the aromatic products of the reaction of amino acids and carbohydrates in the presence of heat, responsible for the tastes of coffee and bread and the tasty brown crust on a roast. “When you cook food you make its chemical composition more complex,” McGee says. “What’s the most complex natural, uncooked food? Fruit, which is produced by plants specifically to appeal to animals. I used to think it would be interesting to know if humans are the only animals that prefer cooked food, and now we’re finding out it’s a very basic preference.”

Among Wrangham’s professional peers, his theory elicits skepticism, mainly because it implies that fire was mastered around the time Homo erectus appeared, roughly 1.8 million years ago. Until recently, the earliest human hearths were dated to about 250,000 B.C.; last year, however, the discovery of charred bone and primitive stone tools in a cave in South Africa tentatively pushed the time back to roughly one million years ago, closer to what Wrangham’s hypothesis demands but still short. He acknowledges that this is a problem for his theory. But the number of sites dating from that early period is small, and the evidence of fire might not have been preserved. Future excavations, he hopes, will settle the issue.

In Wrangham’s view, fire did much more than put a nice brown crust on a haunch of antelope. Fire detoxifies some foods that are poisonous when eaten raw, and it kills parasites and bacteria. Again, this comes down to the energy budget. Animals eat raw food without getting sick because their digestive and immune systems have evolved the appropriate defenses. Presumably the ancestors of Homo erectus—say, Australopithecus—did as well. But anything the body does, even on a molecular level, takes energy; by getting the same results from burning wood, human beings can put those calories to better use in their brains. Fire, by keeping people warm at night, made fur unnecessary, and without fur hominids could run farther and faster after prey without overheating. Fire brought hominids out of the trees; by frightening away nocturnal predators, it enabled Homo erectus to sleep safely on the ground, which was part of the process by which bipedalism (and perhaps mind-expanding dreaming) evolved. By bringing people together at one place and time to eat, fire laid the groundwork for pair bonding and, indeed, for human society.

We will now, in the spirit of impartiality, acknowledge all the ways in which cooking is a terrible idea. The demand for firewood has denuded forests. As Bee Wilson notes in her new book, Consider the Fork, the average open cooking fire generates as much carbon dioxide as a car. Indoor smoke from cooking causes breathing problems, and heterocyclic amines from grilling or roasting meat are carcinogenic. Who knows how many people are burned or scalded, or cut by cooking utensils, or die in cooking-related house fires? How many valuable nutrients are washed down the sink along with the water in which vegetables were boiled? Cooking has given the world junk food, 17-course tasting menus at restaurants where you have to be a movie star to get a reservation, and obnoxious, overbearing chefs berating their sous-chefs on reality TV shows. Wouldn’t the world be a better place without all that?

Raw-food advocates are perfectly justified in eating what makes them feel healthy or morally superior, but they make a category error when they presume that what nourished Australopithecus should be good enough for Homo sapiens. We are, of course, animals, but that doesn’t mean we have to eat like one. In taming fire, we set off on our own evolutionary path, and there is no turning back. We are the cooking animal.